When Cheaper Tokens Lead to Bigger Bills

There is a comforting story that the AI industry tells itself: model prices are falling, competition is heating up, and inference is becoming a commodity. Like most comforting stories, it is true in a narrow sense and misleading in a broader one.

Per-token prices have indeed fallen dramatically since the early days of GPT 3. But if you zoom out from the unit price and look at what organizations are actually spending to get meaningful work out of AI, the picture reverses. Total costs are rising, and for good reason. The tasks we ask AI to perform have grown so much larger, so much more complex, and so much more valuable that the savings on individual tokens are overwhelmed many times over. On top of that, the latest generation of frontier models has stopped getting cheaper, and the next generation will be significantly more expensive.

This creates a layered challenge for any organization planning its AI investments. The economics of tokens, the economics of agent-driven workflows, and the economics of infrastructure at scale are all moving in directions that reward careful planning and punish assumptions built on the idea that AI will simply get cheaper over time. Each of these layers compounds the others, and understanding how they interact is essential for making sound decisions about where, how, and how much to invest.

Tokenomics and Growing Expectations

The price of a token is the atomic unit of AI economics, the smallest piece of the cost puzzle. But just as understanding the price of a brick tells you very little about the cost of building a house, understanding token prices in isolation misses almost everything that matters about what organizations are actually paying. This section traces how token economics have evolved, why the headline numbers are misleading, and what the real cost drivers look like today.

The end of the price decline

For several years, each new generation of frontier models arrived with a lower price tag. GPT 4 was cheaper per token than GPT 3.5 at equivalent capability. Claude 3 was cheaper than Claude 2. The pattern trained the market to expect that waiting would always be rewarded with better economics.

That pattern has broken. Claude Opus 4.6 and GPT 5.4, the latest flagship models from Anthropic and OpenAI, both held their pricing steady relative to their predecessors. Google went further with Gemini 3, actually raising prices compared to Gemini 2. At the frontier, we have bounced off the bottom.

The trajectory ahead points upward. Both OpenAI and Anthropic have teased upcoming models, codenamed Spud and Mythos respectively, that represent a genuinely new class. Anthropic has already published pricing for Mythos: $25 per million input tokens and $125 per million output tokens. To put that in perspective, Claude Opus 4.6, currently Anthropic’s most capable publicly available model, costs $5 per million input tokens and $25 per million output tokens. Mythos is five times more expensive across the board.

Consider what this means in practice. Mythos is purpose-built for complex work like large-scale software development, and by all accounts it represents a genuine leap in capability. Organizations that can afford to deploy it will gain access to an AI that can take on substantially more ambitious tasks with higher reliability. Organizations that cannot afford it will be working with the previous generation’s capabilities, watching the gap widen with each passing quarter. This is the beginning of a meaningful bifurcation in the market, where the cost of staying at the frontier begins to separate the organizations that can keep up from those that fall behind.

Context windows changed everything

Even setting aside where prices are headed, the idea that AI has gotten cheaper deserves scrutiny. The reason is subtle but profound: the amount of work that a single API call can perform has changed by orders of magnitude, and that change dwarfs any per-token savings.

When GPT 3 first became widely available through the API, the maximum context window was 4,096 tokens, input and output combined. That was the entire universe of a single request. Today, Claude Opus 4.6 offers a context window of one million tokens. Some models, like Llama 4, offer ten million.

Think about what that means for the cost of a single API call. Even if the price per token dropped by 90%, a model that processes 250 times more tokens per request is still dramatically more expensive per call. The growth in context window size has far outstripped the reduction in per-token pricing, which means the actual cost of getting a response from a frontier model today can be vastly higher than it was when token prices were nominally much more expensive.

This is an important shift in how to think about AI economics. The relevant metric for budgeting is the cost per completed task, and that number looks nothing like the per-token price would suggest.

Agents and the multiplication of everything

The context window story becomes even more consequential when you consider how organizations are actually using these models today. The era of direct API calls, where a developer sends a single prompt and receives a single response, is fading. In its place, agents have become the dominant pattern for getting real work out of AI.

Agents are, to put it simply, token-hungry. An agent working on a complex task may make dozens (or hundreds) of API calls, each one potentially filling a substantial portion of the context window. These agents orchestrate multi-step workflows, call multiple models, integrate with external tools, and carry forward growing context as they work through a problem. The model itself is the base unit of compute at the bottom of these systems, but the scaffolding around it multiplies that base cost dramatically.

The shift in expectations has been staggering. When ChatGPT first launched, people were pasting a paragraph of text and operating on it one paragraph at a time. That was the scope of what felt possible. Today, agents work across massive codebases, synthesize data from hundreds of sources, perform deep research and analytics, and execute long-running tasks that would take a human team days or weeks. Our ambitions have grown by several orders of magnitude, and our token consumption has grown to match.

All of these forces, stabilizing per-token prices, expanding context windows, and agent-driven workflows, converge into what we might call the real tokenomics of enterprise AI. The sticker price per token is almost irrelevant. What matters is how many tokens you burn to complete the work you actually need done, and that number is climbing steadily.

This naturally raises a question: if AI is getting more expensive to use in practice, why are organizations choosing to spend more? The answer lies in the relationship between cost and productivity.

The Logic of Spending More

Understanding the cost dynamics is necessary, but it only tells half the story. The more important question for decision-makers is when and why spending more on AI is the right call. The economics of agentic AI follow a logic that, once you see it clearly, explains both why budgets are growing and why they should be.

When is an agent actually worth it?

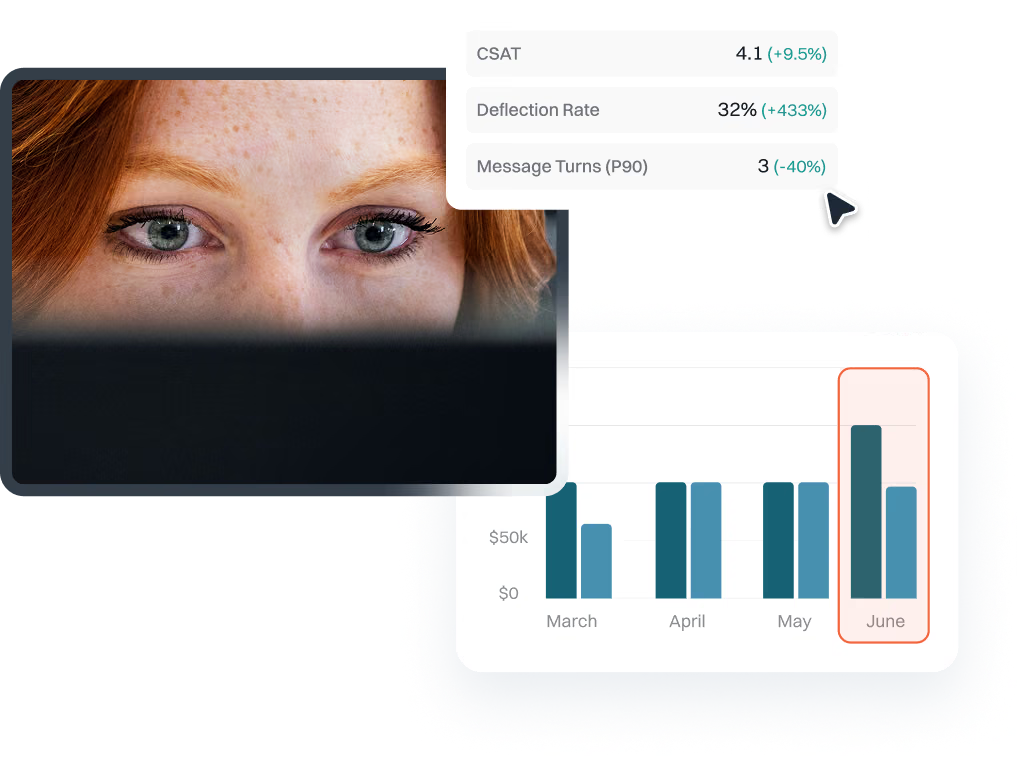

There is a straightforward way to think about whether an agent is genuinely improving productivity, and it comes down to a simple relationship between three things: how likely the agent is to succeed, how long it takes a human to check the result, and how long it would take a human to just do the task themselves.

An agent is adding value when the probability of it accomplishing its objective is greater than the ratio of verification time to task performance time. In more concrete terms, imagine you have a task that would take a human two hours to complete. If it takes that human ten minutes to review the agent’s output and confirm whether it succeeded, the verification-to-performance ratio is about 0.08. The agent only needs to succeed roughly 8% of the time to be a net positive. For tasks where verification is fast relative to execution, even agents with modest reliability are well worth using.

This is why organizations are gravitating toward tasks where AI can tackle complex, time-consuming work while humans retain a lightweight review role. The bigger the gap between “how long it takes to do” and “how long it takes to check,” the more forgiving the math becomes.

The environment matters

This equation holds cleanly in one important condition: that when an agent fails, nothing is worse off than when it started. Consider a document processing scenario where an agent is working through a large volume of files. If the results come back poorly, a human can simply discard them and start over. The environment is unchanged. The cost of failure is just the original cost of doing the work, no more.

Now consider a customer service agent that handles a live conversation. If it provides a customer with incorrect information, it has damaged the relationship. The effort required to recover, correcting the misinformation, rebuilding trust, managing potential escalation, can far exceed the effort of handling the interaction from scratch. In these scenarios, the cost of failure is higher than the cost of the original task, which means the agent needs to clear a significantly higher bar of reliability before deployment makes sense.

Organizations evaluating where to deploy agents should think carefully along this dimension. Tasks with high execution time, fast verification, and minimal environmental impact from failure are the sweet spot. Tasks where failure is visible to customers or alters shared state require much higher confidence before the economics work out.

Why more spending improves the equation

Once you see this framework, the market’s pull toward more expensive, more capable models starts to make perfect sense. There are two ways to improve the productivity equation: increase the probability that the agent succeeds, and decrease the time humans spend verifying results. More capable models do both. They produce higher-quality outputs that succeed more often and require less scrutiny when they do.

This is the engine driving adoption of frontier models despite their rising costs. If a more expensive model reduces verification time from ten minutes to two minutes while also improving success rates, the productivity gains can far outweigh the additional token spend. The value of the work being displaced, the human hours no longer needed, is the real number that justifies the investment.

Smaller open-weight models have improved tremendously and continue to push the boundaries of what’s possible at lower price points. For straightforward tasks like classification, even models you can run on a local machine perform admirably, and in many cases you could handle those tasks without an LLM at all. But the high-value work, the complex multi-step tasks where agents are displacing significant human effort, continues to pull organizations toward the frontier. Each improvement in model capability reveals new tasks that could be automated, which creates a natural escalator: better models lead to more ambitious deployments, which demand even better models.

The cumulative effect is that AI budgets grow even as per-token prices stabilize, because the volume and ambition of what organizations are doing with AI keeps expanding. And at a certain scale, this expanding footprint collides with a different kind of constraint entirely.

The Capacity Cliff

So far, the cost story has been about tokens, tasks, and the economics of agent productivity. But for organizations whose AI consumption has grown large enough, a fundamentally different set of problems emerges. These problems have less to do with what models cost and more to do with whether you can access them at all.

When shared capacity stops working

Most organizations start on shared capacity. You pay per token through a provider’s API, and the provider manages the underlying infrastructure. This works well at moderate volumes. The breaking point typically arrives when an organization crosses roughly three million dollars per year in AI spend concentrated through a single provider. At that threshold, shared infrastructure starts producing timeouts, rate limits, and capacity shortages that directly impact production workloads.

The shift this forces is significant. Provisioned capacity means renting a dedicated bank of GPUs from a cloud provider, essentially reserving a portion of the infrastructure for your exclusive use. If you own your own hardware, the math is functionally the same: instead of paying a cloud provider, you’re paying to maintain, power, and cool the machines yourself. Either way, you move from paying for what you use to paying for what you have, and that shift from variable to fixed costs introduces a cascade of new planning challenges.

Managing peak demand

Imagine you have a fixed pool of GPUs serving your organization’s AI workloads, and twenty different agents are running against that pool. How much capacity is each one consuming? What does that make the effective cost per agent? These questions seem simple, but they are surprisingly difficult to answer without specialized tooling.

Peak demand makes everything harder. If several agents experience high usage at the same time, they can collectively exceed the provisioned capacity, causing exactly the kind of shortfalls that provisioning was supposed to prevent. You’re left choosing between two imperfect strategies. You can provision enough capacity to handle your highest expected peak, which means paying for resources that sit idle most of the time. Or you can maintain a smaller provisioned pool and allow excess demand to spill over into shared capacity at per-token prices.

The spillover approach sounds reasonable until you consider the operational reality. You’re now managing two entirely different pricing models for the same workloads. A single agent’s execution can span both provisioned and shared capacity within one task. Attributing costs accurately, understanding your true unit economics, and making informed tradeoffs becomes extraordinarily difficult.

Purchasing decisions with no obvious answers

Layered on top of the technical challenges are commercial ones. Every hyperscaler offers a different menu of provisioned capacity SKUs with distinct pricing, performance characteristics, and commitment requirements. Favorable pricing typically requires committing for six months to a year, which means forecasting your capacity needs far in advance in a domain where usage patterns are evolving rapidly.

The strategic questions get thorny fast. Is it better to lock into provisioned capacity on AWS for Anthropic models through Bedrock, or to spread your workload across multiple providers’ shared capacity for the same models? Provisioning gives you guaranteed throughput but ties you to one cloud’s pricing and availability. Distributing gives you resilience and optionality but sacrifices the performance guarantees you’re provisioning to get in the first place. There is no universal right answer, and the variables shift as providers change their offerings and your own usage evolves.

The cliff and the siloing trap

Perhaps the most treacherous property of provisioned capacity is that problems arrive suddenly. Everything runs smoothly right up until it doesn’t. Adding one more agent, onboarding one more team, or launching one more initiative against an already-loaded GPU bank can push the system past a tipping point. The degradation is not gradual. It falls off a cliff, and it tends to happen at the worst possible moment, precisely when you’re scaling up and can least afford disruption.

This cliff dynamic leads many teams to a defensive strategy: provisioning a small, isolated pool of capacity for each individual use case. If each agent or team has its own dedicated GPUs, one workload can’t starve another. The problem is that this approach sacrifices the entire economic rationale for provisioned capacity. Shared pools are efficient because diverse workloads with different peak times can share resources. When you silo each use case into its own pool, every pool carries its own idle capacity overhead, and the total cost can exceed what you would have spent on shared capacity.

Tools like Pay-i exist to help organizations navigate this tangle, providing visibility into per-agent costs, real-time capacity utilization, and the ability to model different provisioning strategies before committing capital. For organizations operating at this scale, the infrastructure planning challenge deserves as much attention as model selection or prompt engineering.

Putting It Together

The economics of enterprise AI are moving in a direction that demands a different kind of planning than most organizations are accustomed to. Token prices at the frontier have stopped falling and are poised to rise with the next generation of models. The shift toward agentic workflows has made per-token pricing a poor proxy for actual costs. And the infrastructure decisions required at scale introduce fixed commitments and capacity planning challenges that most teams have limited experience managing.

What ties these threads together is a single underlying dynamic: the cost of AI productivity is rising because the value of AI productivity is rising. Organizations are spending more because the work AI can now do is genuinely worth more, and the ones that plan deliberately for this reality will be far better positioned than those still waiting for the sticker price to come down.